The Myth of QA’s Demise

“Is QA dead?” It’s a provocative question that seems to surface every few years, usually whenever a new testing tool emerges or a buzzworthy technology takes center stage.

Today, in July 2025, the rise of AI in software development has revived that question with fresh intensity. But the truth is more nuanced: QA as a gated, siloed function may have “died,” but quality assurance itself has been reborn—deeper, broader, and more strategic than ever before.

For decades, QA teams occupied a well-defined corner of the SDLC: plan your tests up front, execute them near the end, file bugs, and rinse and repeat. In that model, testers were the “last line of defense” before a release—and a common target for headcount cuts or offshoring when budgets tightened. Headlines declaring “QA Is Dead” or “Testing Will Be Fully Automated by 2025” have proliferated, stoking anxiety among testers everywhere.

But those headlines miss the point. What’s truly died is the notion of QA as a separate, isolated function. Today, quality lives everywhere—embedded in planning, coding, infrastructure, and monitoring. We’ve transitioned from gated-release quality checks to continuous, circular feedback loops that intertwine development and validation at every step. In that sense, QA has not vanished; it has been woven into the fabric of how products are built.

AI as a Quality Augmentor

The most transformative force in modern QA is undoubtedly AI. Yet rather than rendering human testers obsolete, AI is amplifying what we can achieve—and elevating the human role in the process. Let’s explore three key ways AI is augmenting quality assurance:

1. Automated, Intelligent Test Generation

Traditional test-writing can be laborious: map requirements to test cases, handle UI locators or API specifications, and keep suites up to date as applications evolve. AI-driven test frameworks upend that paradigm by automatically generating and adapting test cases in response to code changes. Instead of crafting every step manually, teams feed their application model into an AI engine that suggests end-to-end scenarios, edge-case combinations, and data variations.

Crucially, these AI agents can continuously learn from test results—identifying high-risk areas that warrant deeper coverage and pruning redundant checks elsewhere. The outcome? Broader test coverage with a fraction of the maintenance overhead, freeing QA engineers from rote scripting and letting them focus on strategy.
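To make "learning from test results" concrete, here is a minimal sketch that ranks application areas by their historical failure rate, so the riskiest areas can receive deeper coverage first. It is a plain counting heuristic rather than a trained model, and the `RunRecord` type, its `area` label, and the function names are all hypothetical, introduced only for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class RunRecord:
    """One historical test run (hypothetical shape, not a real framework type)."""
    test_name: str
    area: str       # assumed label for the part of the application under test
    passed: bool


def failure_rate_by_area(results):
    """Return {area: fraction of runs that failed} from historical results."""
    runs = defaultdict(int)
    failures = defaultdict(int)
    for record in results:
        runs[record.area] += 1
        if not record.passed:
            failures[record.area] += 1
    return {area: failures[area] / runs[area] for area in runs}


def rank_areas(results):
    """Areas ordered from highest to lowest failure rate (ties broken by name)."""
    rates = failure_rate_by_area(results)
    return sorted(rates, key=lambda area: (-rates[area], area))


history = [
    RunRecord("pay_with_card", "checkout", False),
    RunRecord("apply_coupon", "checkout", True),
    RunRecord("keyword_search", "search", True),
    RunRecord("sso_login", "login", False),
]
ranked = rank_areas(history)  # most failure-prone area first
```

A real system would likely weigh recency, code churn, and business impact as well; the point here is only that the prioritization signal can start from data teams already collect.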

2. Self-Healing Pipelines

One of the biggest drains on QA productivity is maintenance: UI tweaks break locators, API versions shift, and tests drift out of sync. Enter self-healing scripts. Powered by machine learning, modern frameworks detect minor changes (say, a button’s renamed CSS class or an endpoint’s reordered query parameters) and automatically adjust the test logic. When a locator fails, AI can scan the surrounding DOM, infer the updated selector, and patch the test on the fly.

The result is a dramatic drop in false failures and pipeline outages. Teams spend less time firefighting broken builds and more time designing new tests, refining metrics, and collaborating on quality goals.
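The locator-healing step described above can be sketched in a few lines. This version assumes DOM elements are available as plain dictionaries of attributes and uses string similarity from Python's standard `difflib` in place of a learned model; `heal_locator`, `score`, and the `threshold` value are hypothetical names and settings, not the API of any particular framework.

```python
from difflib import SequenceMatcher


def similarity(a: str, b: str) -> float:
    """String similarity in [0, 1] based on matching character blocks."""
    return SequenceMatcher(None, a, b).ratio()


def score(candidate: dict, expected: dict) -> float:
    """Average similarity between a candidate element and the last known good one."""
    total = sum(
        similarity(str(candidate.get(key, "")), str(value))
        for key, value in expected.items()
    )
    return total / len(expected)


def heal_locator(elements, expected, threshold=0.6):
    """Return the element closest to `expected`, or None if nothing is close enough."""
    best = max(elements, key=lambda el: score(el, expected), default=None)
    if best is None or score(best, expected) < threshold:
        return None  # refuse to guess: surface the failure to a human instead
    return best


# Attributes recorded the last time the test passed (illustrative values).
expected = {"tag": "button", "id": "submit-btn", "text": "Submit", "class": "btn primary"}

# Current page: the button's CSS class has changed slightly.
page = [
    {"tag": "a", "id": "help", "text": "Help", "class": "link"},
    {"tag": "button", "id": "submit-btn", "text": "Submit", "class": "btn btn-primary"},
]
healed = heal_locator(page, expected)
```

The threshold matters as a design choice: set too low, a "healed" test may silently click the wrong element and hide a genuine regression, so returning `None` and failing loudly is the safer default.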

3. Real-Time, Distributed Validation

As microservice architectures and cloud-native deployments become the norm, end-to-end testing at scale poses unique challenges. Coordinating dozens of services, databases, and UX flows can bog down release cycles—unless you leverage AI orchestration.

AI test directors can spin up parallel clusters of test environments, distribute workloads intelligently across nodes, and adapt test suites based on real-time performance metrics. Imagine thousands of tests running in seconds, each guided by an AI agent optimizing for speed, stability, and coverage. The old trade-off between depth and velocity no longer applies: you get both, continuously, throughout development.
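As a rough illustration of distributing workloads across nodes, the sketch below assigns tests to workers with a greedy longest-first heuristic based on each test's last recorded duration. It is a deterministic stand-in for the adaptive orchestration described above, and the `distribute` function, the test names, and the durations are assumptions made for the example.

```python
import heapq


def distribute(durations: dict, workers: int):
    """Assign tests to workers, longest first, always to the least-loaded worker."""
    if workers < 1:
        raise ValueError("workers must be at least 1")
    # Min-heap of (current load in seconds, worker index).
    heap = [(0.0, index) for index in range(workers)]
    heapq.heapify(heap)
    plan = [[] for _ in range(workers)]
    ordered = sorted(durations.items(), key=lambda item: (-item[1], item[0]))
    for name, seconds in ordered:
        load, index = heapq.heappop(heap)
        plan[index].append(name)
        heapq.heappush(heap, (load + seconds, index))
    return plan


def makespan(plan, durations):
    """Wall-clock time of the slowest worker under a given plan."""
    return max((sum(durations[name] for name in bucket) for bucket in plan), default=0)


# Last observed runtimes in seconds (illustrative values).
durations = {"checkout_e2e": 90, "search_e2e": 60, "login_e2e": 30, "profile_e2e": 30}
plan = distribute(durations, workers=2)
```

With these numbers the two workers finish in 120 and 90 seconds, compared with 210 seconds for a serial run. An orchestrator that adapts to real-time metrics would refresh the duration estimates after every run and rebalance accordingly.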

The New Roles: AI Test Directors and Quality Architects

With AI handling scale, repetition, and low-level maintenance, human experts are migrating upstream:

- Quality Architects define the overall testing strategy, shape AI training data, and ensure that tests align with business risk.

- AI Test Directors monitor model health, fine-tune algorithms for reliability, and interpret AI-generated insights for compliance and security.

- Developer-QA Collaborators partner on shift-left initiatives—developers write unit tests and integration checks, while QA engineers guide AI to cover cross-cutting scenarios and non-functional requirements.

This elevation of skill sets not only protects QA roles but makes them more essential. According to the U.S. Bureau of Labor Statistics, employment of software developers, quality assurance analysts, and testers is projected to grow 17% from 2023 to 2033, much faster than the average for all occupations. Many of those openings are likely to be in AI-enhanced quality functions, not in mundane test execution.

Tackling the Challenges

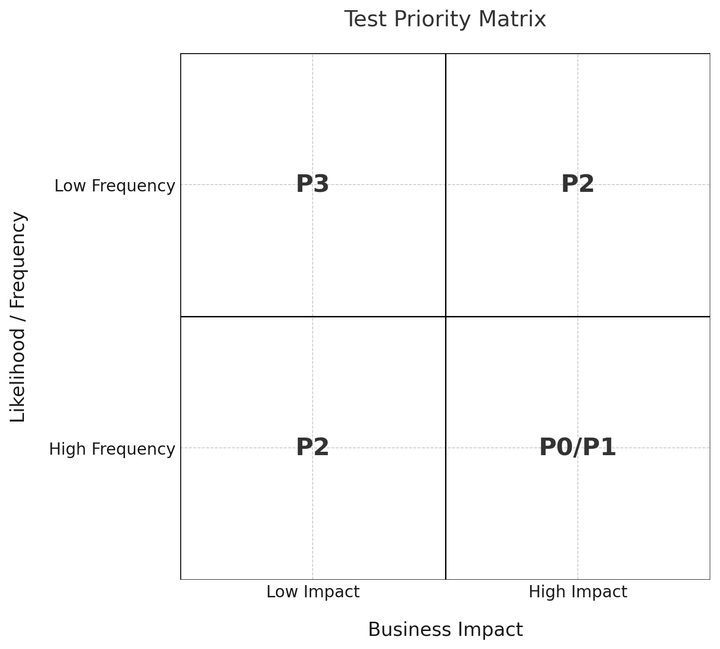

Of course, integrating AI into QA isn’t a silver bullet. Teams must navigate three critical challenges:

- Explainability & Trust

AI models can feel like black boxes. Regulators, security auditors, and executive stakeholders require clear audit trails: Why did the AI generate this test? How was that risk threshold determined? Building transparency into AI-powered QA pipelines (through detailed logging, versioned training datasets, and human-in-the-loop checkpoints) is non-negotiable.

- Data Quality & Bias

Garbage in, garbage out holds doubly true for AI. If your test-generation models train on skewed or incomplete datasets, they’ll perpetuate blind spots, missing edge cases or masking critical defects. Continuous data governance practices, periodic model retraining, and cross-functional review boards help maintain dataset integrity and coverage balance.

- Balancing Automation with Human Judgment

AI excels at pattern recognition and repetition, but it can’t replace human intuition for creative, exploratory testing. Complex UX flows, nuanced accessibility reviews, and performance-tuning often demand human empathy and domain expertise. The most resilient QA strategies blend AI automation with deliberate human oversight, ensuring that no critical scenario is left unexamined.
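One way to picture the audit trail and human-in-the-loop checkpoint mentioned above is a small record attached to every AI-generated test. The sketch below is an assumption-laden illustration: the `GeneratedTestRecord` fields, the `risk_threshold` of 0.7, and the policy that high-risk tests need a named approver are all hypothetical choices, not a standard.

```python
import hashlib
import json
from dataclasses import asdict, dataclass
from typing import Optional


@dataclass
class GeneratedTestRecord:
    """Audit entry for one AI-generated test (hypothetical schema)."""
    test_id: str
    rationale: str             # why the generator proposed this test
    model_version: str         # which model produced it
    dataset_version: str       # which versioned training dataset was used
    risk_score: float          # assumed to lie in [0, 1]
    approved_by: Optional[str] = None  # human-in-the-loop checkpoint


def fingerprint(record: GeneratedTestRecord) -> str:
    """Stable SHA-256 digest of the record, suitable for tamper-evident logs."""
    payload = json.dumps(asdict(record), sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


def can_enter_pipeline(record: GeneratedTestRecord, risk_threshold: float = 0.7) -> bool:
    """Assumed policy: tests at or above the threshold need a named approver."""
    if record.risk_score >= risk_threshold:
        return record.approved_by is not None
    return True


record = GeneratedTestRecord(
    test_id="gen-0001",
    rationale="Payment retries changed in the latest commit",
    model_version="generator-v3",
    dataset_version="2025-06-snapshot",
    risk_score=0.9,
)
blocked = not can_enter_pipeline(record)   # high risk, nobody has signed off yet
record.approved_by = "qa.lead@example.com"
allowed = can_enter_pipeline(record)
```

Storing the fingerprint alongside each log entry gives auditors a simple way to confirm that a record has not been altered after approval.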

Looking Ahead

Quality assurance in 2025 is neither dead nor obsolete—it’s evolving into a hyper-connected, AI-augmented practice that spans the entire SDLC. The old QA milestones—unit test phase, integration check, system test gate—have dissolved into a continuous loop of design-validate-learn. AI handles the repetitive heavy lifting, while human engineers guide strategy, ethics, and innovation.

For QA professionals, the message is clear: embrace AI as your ally. Invest in data literacy, learn to interpret model outputs, and hone your skills in areas where humans still outshine machines—like creative test design, risk analysis, and stakeholder communication. Organizations that seize this opportunity will unlock unprecedented velocity and reliability. Those that cling to outdated QA silos risk irrelevance.

Ultimately, QA isn’t dying; it’s being reborn. In the synergy of AI and human expertise lies the future of software quality—faster, smarter, and more resilient than ever before.
